Features

Ingest

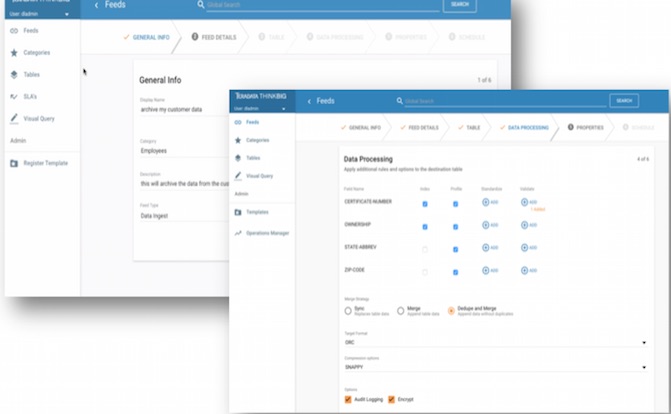

Self-service data ingest with data cleansing, validation, and automatic profiling.

Kylo can connect to most sources and infer schemas from common data formats. Kylo's default ingest workflow moves data from a source into Hive tables, with advanced configuration options for field-level validation, data protection, data profiling, security, and overall governance.

Using Kylo's pipeline template mechanism, IT can extend Kylo to connect to any source, read any format, and load data into any target, in either a batch or a streaming pattern.

Prepare

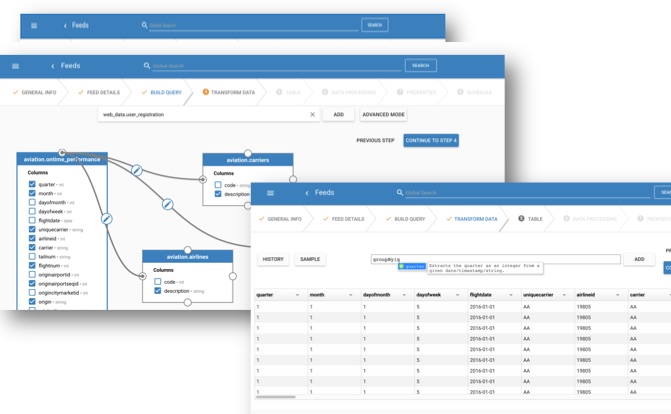

Wrangle data with visual SQL and interactive transformations through a simple user interface.

Discover

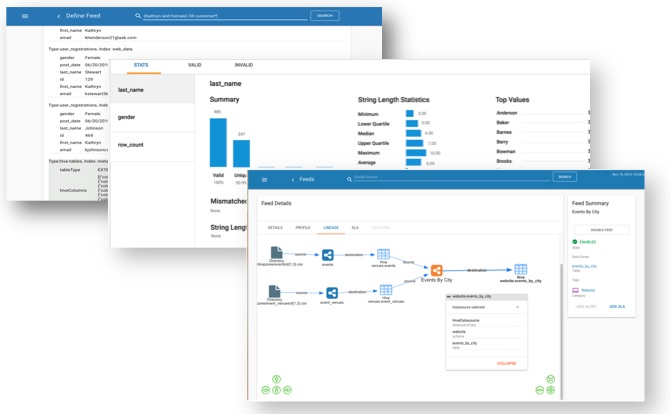

Search and explore data and metadata, and view lineage and profiling statistics.

Monitor

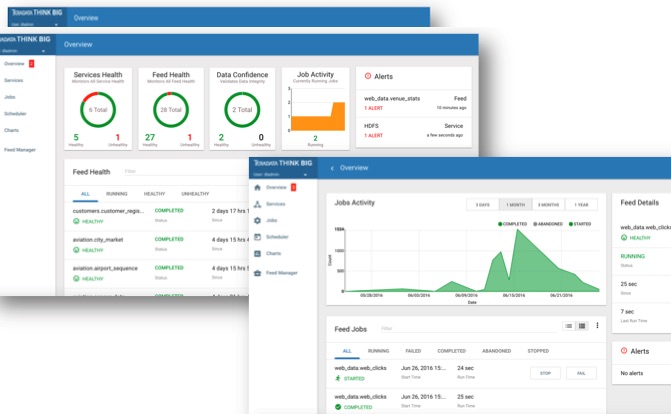

Monitor the health of feeds and services in the data lake. Track SLAs and troubleshoot performance.

Design

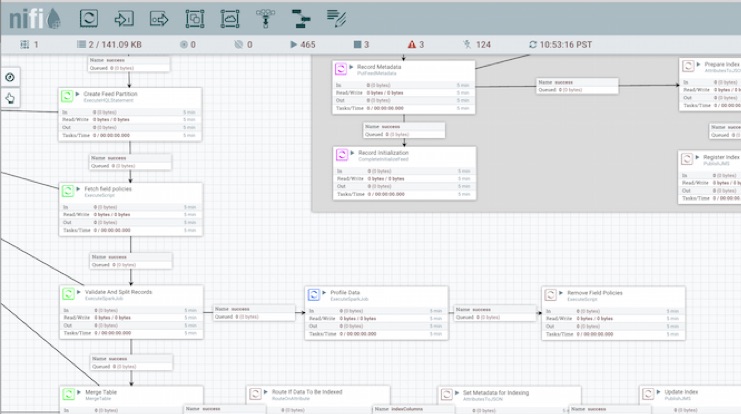

Design batch or streaming pipeline templates in Apache NiFi and register them with Kylo to enable user self-service.

Designers develop and test new pipelines in Apache NiFi and register them as templates with Kylo, determining which properties users are allowed to configure when creating feeds. This embodies the write-once-use-many principle: data owners, rather than engineers, can create new feeds, while IT retains control over the underlying dataflow patterns.

Kylo adds a suite of NiFi processors for Spark, Sqoop, Hive, and special-purpose data lake primitives that provide additional capabilities.

Who uses Kylo?

Airline

2 of the top 15 global brands

Insurance

2 of the top 10 US brands

Telecommunications

2 of the top 10 European brands

Financial Services

1 of the top 5 global brands

Banking

2 of the top 5 global brands

Retail and Consumer Goods

2 of the top 10 global brands